In elementary school math, getting the right answer wasn’t enough. Teachers wanted to see the steps you took to get there.

This basic rule matters more than ever as AI takes on a growing role in real-world decision-making. AI can be very useful because it can sort through information much faster than traditional methods. But when a recommendation arrives without an explanation, users are being asked to take a leap of faith.

How black boxes happen

The tech industry often refers to opaque AI as a “black box.” Data goes in and an answer comes out, while the process inside is hidden.

This happens because many modern AI models don’t follow a simple list of human-written rules. Instead, they are trained on data and learn patterns that don’t always translate neatly into plain language. The final output might look definitive, but the path the model took to reach it can be difficult to explain.

In low-stakes situations, a black-box result may not matter much. If a music app suggests a terrible song, you just skip it. But when an AI recommendation carries real consequences, the missing explanation becomes much harder to accept.

Fortunately, total opacity isn’t inevitable, and more developers are building transparency into their products. AI tools can be designed to make their results easier to inspect, giving users a practical way to evaluate an answer before they act on it.

Why a target needs a reason

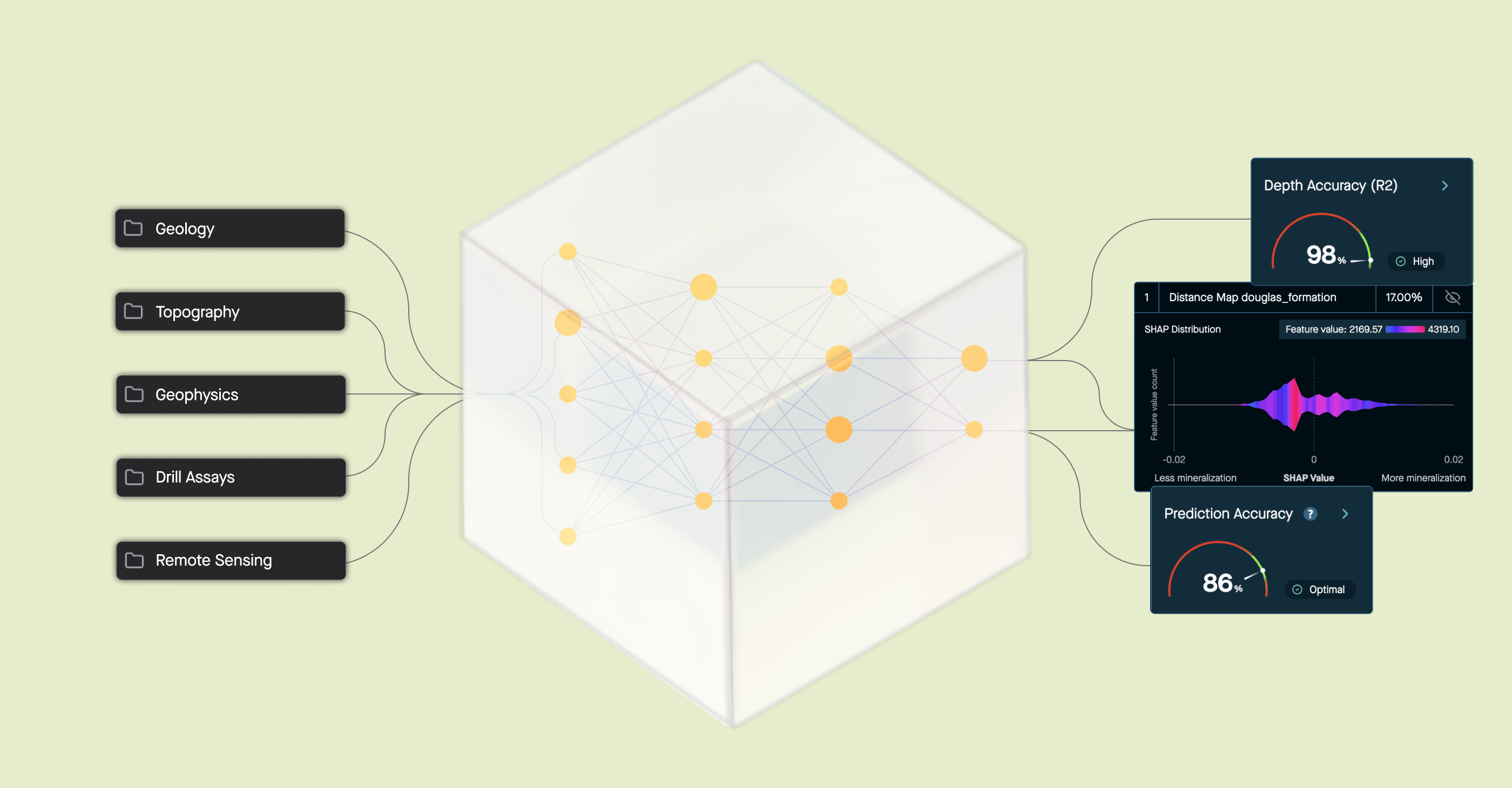

Mineral exploration shows why this visibility matters. Before drilling for metals or minerals, exploration teams analyze geoscientific data to determine where deposits are likely to exist underground. The work is often slow and difficult, and the cost of getting it wrong can be high. AI can help by drawing connections across large datasets to surface areas with strong mineral potential.

But not all targets are equal, and a highlighted area doesn’t explain itself. One flagged zone might reflect unusual metal values in soil samples along a fault, which can be a geologically meaningful signal of a real deposit. Another might rank highly just because of a magnetic anomaly from a rock type that has nothing to do with the deposit being sought. A system that treats both results the same way isn’t very helpful.

DORA, VRIFY’s AI prospectivity mapping software, is built to make this distinction clear. When the system highlights a target, it shows which specific data layers drove the result. If the model appears to be responding to a misleading signal rather than meaningful geology, geoscientists can adjust the inputs using their field knowledge and run the analysis again to refine the targets.
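To make "which data layers drove the result" concrete, here is a minimal sketch that assumes a simple linear scoring model. It is not how DORA works. The layer names, weights, and zone values are hypothetical, chosen only to mirror the two flagged zones described above. Each layer's contribution is its weight times its normalized value, so two zones can reach similar scores for very different reasons.

```python
# Illustrative only: a toy linear prospectivity score with per-layer attribution.
# Layer names, weights, and zone values are hypothetical assumptions,
# not taken from DORA or any real dataset.

WEIGHTS = {
    "soil_metal_anomaly": 0.5,
    "fault_proximity": 0.3,
    "magnetic_anomaly": 0.6,
}

# Normalized (0 to 1) layer values for two hypothetical flagged zones.
ZONES = {
    # Strong soil metal values along a fault.
    "zone_a": {"soil_metal_anomaly": 0.9, "fault_proximity": 0.8, "magnetic_anomaly": 0.1},
    # Mostly a magnetic anomaly from an unrelated rock type.
    "zone_b": {"soil_metal_anomaly": 0.1, "fault_proximity": 0.2, "magnetic_anomaly": 1.0},
}


def contributions(layers: dict[str, float], weights: dict[str, float]) -> dict[str, float]:
    """Return each layer's contribution (weight * normalized value) to the score."""
    return {name: weights[name] * value for name, value in layers.items() if name in weights}


def explain(layers: dict[str, float], weights: dict[str, float] = WEIGHTS):
    """Return the total score and the layers ranked by contribution, largest first."""
    contrib = contributions(layers, weights)
    score = sum(contrib.values())
    ranked = sorted(contrib.items(), key=lambda kv: kv[1], reverse=True)
    return score, ranked


if __name__ == "__main__":
    for zone, layers in ZONES.items():
        score, ranked = explain(layers)
        print(f"{zone}: score={score:.2f}, top driver={ranked[0][0]}")

    # A geoscientist judges the magnetic layer misleading here and excludes it.
    adjusted = {**WEIGHTS, "magnetic_anomaly": 0.0}
    for zone, layers in ZONES.items():
        score, _ = explain(layers, adjusted)
        print(f"{zone} (magnetics excluded): score={score:.2f}")
```

Both zones score similarly at first, but the ranked contributions reveal that one is driven by soil geochemistry and the other almost entirely by magnetics. Setting a layer's weight to zero and rescoring is a very simplified stand-in for a geoscientist adjusting the inputs and rerunning the analysis.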

This transparency turns a promising area on a map into a decision the team can stand behind.

Earned trust

Signing off on an answer isn’t the same as making a decision. When AI produces a result without explaining how it got there, the person using it has little basis for judgment. They can go along with it or walk away, but that’s not a good position to be in when the stakes are real.